Foreword

On February 10, 2009, UC Berkeley published a paper on Cloud Computing. Ten years later, it is still quite inspiring.

Cloud Computing is likely to have the same impact on software that foundries have had on the hardware industry.

The impact of cloud computing on software is like the impact of foundries on the hardware industry.

1. Why is Cloud Computing the Future?

What is Cloud Computing?

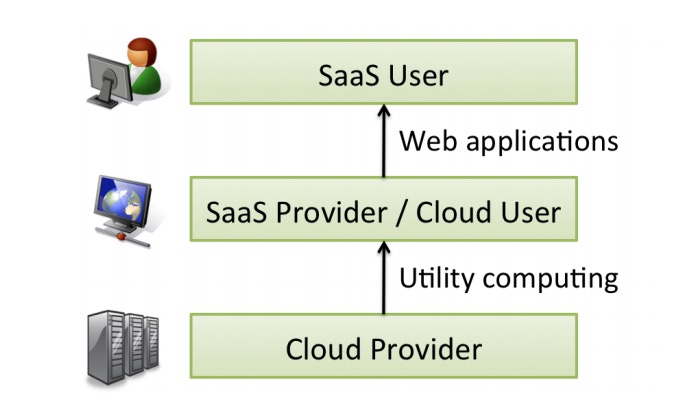

Cloud Computing refers to both the applications delivered as services over the Internet—the services in Software as a Service (SaaS)—and the hardware and systems software in the datacenters that provide those services, the so-called Cloud:

Cloud Computing refers to both the applications delivered as services over the Internet and the hardware and systems software in the datacenters that provide those services.

When a cloud is made available in a pay-as-you-go manner to the general public, we call it a Public Cloud, and the service being sold is Utility Computing. By contrast, a datacenter internal to an enterprise or organization that is not available to the public is called a Private Cloud.

P.S. Utility Computing is a service provisioning model in which a service provider makes computing resources and infrastructure management available to the customer as needed, and charges them for specific usage rather than a flat rate.

The relationship between the user and provider roles is shown in the following figure:

Figure: cloud computing roles

P.S. Of course, SaaS providers can also simultaneously be SaaS users.

The Revolution Sparked by Cloud Computing

The key advantage of cloud computing lies in the elasticity of resources. Using 1000 servers for 1 hour costs no more than using 1 server for 1000 hours. This elasticity of resources is unprecedented:

Moreover, companies with large batch-oriented tasks can get results as quickly as their programs can scale, since using 1000 servers for one hour costs no more than using one server for 1000 hours. This elasticity of resources, without paying a premium for large scale, is unprecedented in the history of IT.
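The arithmetic behind this claim can be sketched in a few lines. The snippet assumes a flat price per server-hour with no volume premium and a job that parallelizes perfectly; the price and the function name are illustrative assumptions, not figures from the paper.

```python
# Hypothetical flat price per server-hour (an assumption for illustration only).
PRICE_PER_SERVER_HOUR = 0.10


def batch_job_cost(servers: int, hours_per_server: float,
                   price: float = PRICE_PER_SERVER_HOUR) -> float:
    """Cost under pure pay-as-you-go pricing: total server-hours times price."""
    return servers * hours_per_server * price


# The same 1000 server-hours of work, spread over two different fleet sizes.
# Assumes perfect parallelism, which real workloads rarely achieve.
one_server = batch_job_cost(servers=1, hours_per_server=1000)
many_servers = batch_job_cost(servers=1000, hours_per_server=1)
```

Under these assumptions the bill is identical, while the wall-clock time drops from 1000 hours to 1 hour.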

Cloud computing also allows application providers to deploy their products as SaaS without needing to own their own datacenters, just as the emergence of semiconductor foundries gave chip companies the opportunity to design and sell chips without owning a fab:

Just as the emergence of semiconductor foundries gave chip companies the opportunity to design and sell chips without owning a fab, Cloud Computing allows deploying SaaS—and scaling on demand—without building or provisioning a datacenter.

Analogous to how SaaS allows users to offload some problems to the SaaS provider, cloud computing allows the SaaS provider to offload some of their problems to the cloud computing vendor:

Analogously to how SaaS allows the user to offload some problems to the SaaS provider, the SaaS provider can now offload some of his problems to the Cloud Computing provider.

From a hardware perspective, cloud computing brings three new aspects:

- The illusion of infinite computing resources available on demand.
- The elimination of an up-front commitment by cloud users.
- The ability to pay for use of computing resources on a short-term basis as needed.

This allows companies to start with a very small investment and increase hardware resources only when actually needed, thereby improving resource utilization. At the same time, the on-demand allocation/release approach is also conducive to saving resources.

2. Why 2009?

The key enabler of cloud computing was the construction and operation of extremely large-scale, commodity-computer datacenters at low-cost locations, where the cost of electricity, network bandwidth, operations, and software is very low, thereby achieving economies of scale:

We argue that the construction and operation of extremely large-scale, commodity-computer datacenters at low-cost locations was the key necessary enabler of Cloud Computing, for they uncovered the factors of 5 to 7 decrease in cost of electricity, network bandwidth, operations, software, and hardware available at these very large economies of scale.

In fact, driven by the rapid growth of Web services in the early 2000s, several large Internet companies such as Amazon, eBay, Google, and Microsoft already operated datacenters of considerable scale by 2009:

Building, provisioning, and launching such a facility is a hundred-million-dollar undertaking. However, because of the phenomenal growth of Web services through the early 2000’s, many large Internet companies, including Amazon, eBay, Google, Microsoft and others, were already doing so.

At the same time, these companies also had to build scalable software infrastructure (such as MapReduce, Google File System, BigTable, Dynamo, etc.), as well as professional operations and security protection mechanisms.

In addition to the hardware and software infrastructure needed to operate large commodity datacenters, new technology trends and business models were also key drivers. In turn, once cloud computing took off, new application opportunities and usage models were discovered, which further promoted its development.

New Technology Trends and Business Models

The emergence of Web 2.0 meant a shift from high-touch, high-margin, high-commitment services to low-touch, low-margin, low-commitment self-service. For example:

- PayPal's emergence allowed individuals to collect payments via credit cards without contracts and long-term commitments, with only small pay-as-you-go transaction fees.
- Amazon CloudFront supported individuals in publishing Web content without establishing relationships with content delivery networks.
- Google AdSense enabled personal web pages to generate advertising revenue without needing to establish relationships with ad display companies.

P.S. High-touch refers to a service process requiring more human interaction, rather than through vending machines, self-service counters, etc.

On the technology front, the emergence of virtual machines allowed customers to choose their own software resource stacks, lowering costs through shared hardware without interfering with each other.

New Application Opportunities

Cloud computing presents excellent opportunities for these types of applications:

- Mobile interactive applications: These require high availability and rely on large datasets that are best hosted in large-scale datacenters.
- Parallel batch processing: Big data batch processing and analysis require large amounts of computing resources for short periods.
- The rise of analytics: Business analytics (such as understanding customers, supply chains, purchasing habits, rankings, etc.) also requires massive computing resources.
- Scaling compute-intensive desktop applications: Mathematical software like Matlab can offload expensive computations to the cloud.

In addition, some "Earthbound" applications that cannot yet move to the cloud because of constraints such as data migration cost and latency might also enjoy the benefits of cloud elasticity and parallelism once wide-area data transfer costs and latency decrease.

3. Utility Computing

Different utility computing offerings can be distinguished based on the level of abstraction presented to the programmer and the level of management of the resources:

Our view is that different utility computing offerings will be distinguished based on the level of abstraction presented to the programmer and the level of management of the resources.

For example, several cloud products at the time virtualized resources like compute, storage, and networking to varying degrees:

- Amazon EC2: Provided VMs that look much like physical hardware, letting users control nearly the entire software stack.
- Google AppEngine: Provided an application-oriented runtime environment.
- Microsoft Azure: Provided a .NET runtime environment, sitting between the former two.

However, from the perspective of cloud vendors and users, these utility computing products represent trade-offs among developer ease-of-use, flexibility, and portability, each with its own suitable scenarios.

4. Cloud Computing Economics

The fine-grained economic models enabled by cloud computing make trade-off decisions more fluid, and in particular, the elasticity offered by clouds serves to transfer risk:

We argue that the fine-grained economic models enabled by Cloud Computing make tradeoff decisions more fluid, and in particular the elasticity offered by clouds serves to transfer risk.

Typically, utility computing is superior to private clouds in two scenarios:

- The demand for a service varies over time. For example, dealing with demand peaks.
- Demand is unknown in advance. For example, a product suddenly becoming popular or a large number of users suddenly leaving.

In the first scenario, the cloud is the better choice from a cost-benefit perspective when the following inequality holds:

UserHours of cloud × (revenue − Cost of cloud) ≥ UserHours of datacenter × (revenue − Cost of datacenter / Utilization)

That is, comparing the expected profit of using cloud computing with the expected profit of using a datacenter considering average utilization:

UserHours × (Hourly Revenue − Cloud Cost) ≥ UserHours × (Hourly Revenue − Datacenter Cost / Utilization)

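P.S. If utilization is 1.0 (100%), the two sides of the inequality take the same form. In practice this is not achievable: as utilization approaches 1.0, response time grows without bound, so the usable capacity of a datacenter is generally only 0.6 to 0.8 of its total.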
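As a rough sketch, the inequality can be written as a small function. All function names and the sample numbers below are hypothetical and chosen only for illustration; the sketch also assumes the same number of user hours on both sides, as in the simplified form above.

```python
def cloud_profit(user_hours: float, revenue: float, cloud_cost: float) -> float:
    """Left-hand side: expected profit when renting capacity from a cloud."""
    return user_hours * (revenue - cloud_cost)


def datacenter_profit(user_hours: float, revenue: float,
                      datacenter_cost: float, utilization: float) -> float:
    """Right-hand side: the hourly cost is divided by average utilization."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return user_hours * (revenue - datacenter_cost / utilization)


def cloud_is_at_least_as_profitable(user_hours: float, revenue: float,
                                    cloud_cost: float, datacenter_cost: float,
                                    utilization: float) -> bool:
    """True when the inequality from the text holds."""
    return (cloud_profit(user_hours, revenue, cloud_cost)
            >= datacenter_profit(user_hours, revenue, datacenter_cost, utilization))


# Hypothetical per-hour figures: revenue 1.00, cloud 0.10, own datacenter 0.05.
low_utilization = cloud_is_at_least_as_profitable(1000, 1.00, 0.10, 0.05, 0.3)
high_utilization = cloud_is_at_least_as_profitable(1000, 1.00, 0.10, 0.05, 0.8)
```

With these made-up numbers the cloud wins at 30% utilization, because the effective datacenter cost rises to about 0.17 per hour, and loses at 80% utilization, where it is about 0.06 per hour.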

For example, suppose a service requires 500 servers at its daytime peak but only 100 servers at night, and average usage over the day is 300 servers. The actual need is then 300 × 24 = 7200 server-hours per day. But provisioning for the peak means paying for 500 × 24 = 12000 server-hours, about 1.7 times the actual need. Therefore, as long as the pay-as-you-go cost per server-hour over 3 years (assuming a 3-year depreciation) is less than 1.7 times the cost of purchasing the server, money can be saved through utility computing.
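The figures in this example can be checked with a few lines of arithmetic. The variable names are illustrative; the numbers are the ones given in the text.

```python
HOURS_PER_DAY = 24

peak_servers = 500      # daytime peak from the example
average_servers = 300   # average over the day, as assumed in the example

# Server-hours the workload actually needs per day.
needed_server_hours = average_servers * HOURS_PER_DAY

# Server-hours paid for when capacity is provisioned for the peak.
provisioned_server_hours = peak_servers * HOURS_PER_DAY

# How much more is paid than is actually used, and the resulting utilization.
overprovision_factor = provisioned_server_hours / needed_server_hours
average_utilization = needed_server_hours / provisioned_server_hours

print(needed_server_hours, provisioned_server_hours)
print(round(overprovision_factor, 1), round(average_utilization, 2))
```

The over-provisioning factor is simply the inverse of average utilization, which ties this example back to the Utilization term in the inequality.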

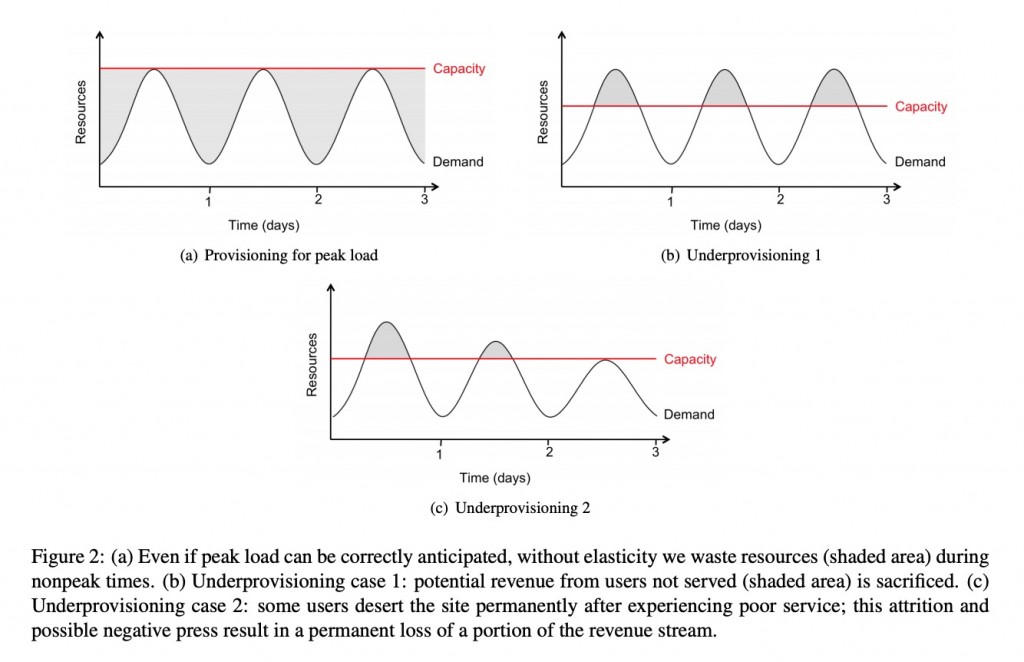

In reality, datacenter server utilization is generally only 5% to 20%, because peak demand typically exceeds the average by factors of 2 to 10, and the only way to handle peak demand in a fixed datacenter is to provision resources in advance. But not all demand peaks can be predicted, such as bursts triggered by news events; in such cases, the resource elasticity provided by cloud computing is particularly important.

Beyond cost factors, the risk transfer capability is also an important value of the cloud computing economic model. Once the peak load exceeds service capacity, economic losses occur, as shown below:

Figure: peak load risk

Not only is the potential revenue from a portion of users lost, but some users may leave permanently due to poor experience.
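The trade-off between over- and under-provisioning can be illustrated with a minimal sketch. The step-shaped demand curve and the function name below are hypothetical; the sketch only counts idle and unserved server-hours and does not model the longer-term loss of users.

```python
from typing import Sequence, Tuple


def provisioning_outcome(hourly_demand: Sequence[int],
                         capacity: int) -> Tuple[int, int]:
    """Return (idle_server_hours, unserved_server_hours) for a fixed capacity."""
    idle = sum(max(capacity - demand, 0) for demand in hourly_demand)
    unserved = sum(max(demand - capacity, 0) for demand in hourly_demand)
    return idle, unserved


# Hypothetical daily demand: 100 servers for 12 night hours, 500 for 12 day hours.
demand = [100] * 12 + [500] * 12

provision_for_peak = provisioning_outcome(demand, capacity=500)
provision_for_average = provisioning_outcome(demand, capacity=300)

# An idealized elastic cloud matches capacity to demand every hour.
elastic_idle = sum(d - d for d in demand)
```

Provisioning for the peak wastes idle capacity, provisioning for the average turns away demand during the day, and only capacity that tracks demand avoids both.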

Other cost risks can also be avoided through cloud computing, such as:

- Depreciation losses incurred from disposing of excess servers when scale is reduced due to unexpected reasons like business slowdowns.
- Increased costs associated with hardware and software upgrades.

The pay-as-you-go short-term rental model can reduce costs for cloud users, while cloud vendors with strong purchasing power can exploit economies of scale to remain profitable. Cloud users also see changes in resource costs reflected quickly in pricing, for example benefiting immediately from falling hardware costs.

5. Top 10 Obstacles and Opportunities for Cloud Computing

| Obstacle | Opportunity |
|---|---|
| Availability of Service | Use Multiple Cloud Providers; Use Elasticity to Prevent DDOS |
| Data Lock-In | Standardize APIs; Compatible Software to Enable Surge Computing |
| Data Confidentiality and Auditability | Deploy Encryption, VLANs, Firewalls; Geographical Data Storage |
| Data Transfer Bottlenecks | FedExing Disks; Data Backup/Archival; Higher Bandwidth Switches |
| Performance Unpredictability | Improved VM Support; Flash Memory; Gang Schedule VMs |
| Scalable Storage | Invent Scalable Store |
| Bugs in Large Distributed Systems | Invent Debugger that Relies on Distributed VMs |
| Scaling Quickly | Invent Auto-Scaler that Relies on Machine Learning; Snapshots for Conservation |
| Reputation Fate Sharing | Offer Reputation-Guarding Services like those for Email |
| Software Licensing | Pay-for-Use Licenses; Bulk Use Sales |

P.S. The first three are technical obstacles to adopting cloud computing, the middle five are technical obstacles to the growth of cloud computing, and the last two are policy and business obstacles to adopting cloud computing. The corresponding opportunities on the right are anticipated solutions.

6. Vision

The long dreamed vision of computing as a utility is finally emerging.

The era of cloud computing has arrived.

It is expected that cloud vendors can sell their computing resources on a pay-as-you-go model, profiting through resource multiplexing. Cloud users can save the high costs of building their own datacenters while freeing themselves from the risks of resource over/under-provisioning.

At the same time, developers should focus on horizontal scaling of VMs to support deployment in cloud environments. Specifically:

- Applications Software: Needs to support both scaling up and down rapidly, including adding/removing resources like CPU and memory, and requires a pay-for-use licensing model.
- Infrastructure Software: Needs to be aware that it is no longer running on bare metal but on VMs, and should have built-in billing.
- Hardware Systems: Should be designed at the scale of a container (at least a dozen racks), which will be the minimum purchasing unit. Operating costs must match performance and purchase costs, while also considering energy efficiency, allowing idle resources to enter low-power modes. Processors should work well with VMs, flash memory should be added to the memory hierarchy, and LAN switches and WAN routers need improved bandwidth and cost efficiency.

7. Inspiration

From a resource perspective, the revolution brought by cloud computing primarily lies in lowering resource usage costs and improving resource utilization.

Benefiting from economies of scale, centrally managed computing resources have lower purchasing and operating costs, and can share and multiplex hardware through virtualization technology, further reducing resource usage costs.

The improvement in resource utilization is reflected at 4 levels:

- Machine Level: Can be quickly rented and released. The number of machines held approaches the number required by the application.
- Resource Level: Different types of resources are provisioned on demand and are not bound to the hardware capabilities of physical machines. The rented resources fit the actual needs of the application.
- Load Level: Virtualization technology allows sharing of hardware resources and fully utilizes CPU multi-core features. Hardware runs at full capacity, spinning furiously.
- Lease Term Level: The resource elasticity brought by the pay-as-you-go short-term rental model changes the situation of low resource utilization during off-peak hours. Flexible allocation avoids paying for idle periods.
