Preface

The previous article, "Pros and Cons of SSR", listed six major challenges of the SSR rendering mode:

- Challenge 1: How to leverage existing CSR code to achieve isomorphism

- Challenge 2: Service stability and performance requirements

- Challenge 3: Construction of supporting facilities

- Challenge 4: The money problem

- Challenge 5: Performance loss of hydration

- Challenge 6: Data requests

These problems are the main reasons SSR has always been far less widely used than CSR. Today, however, three major opportunities (Serverless, low-code, and 4G/5G network environments) have brought a turning point for SSR, and the time is right for it to be put into practice and flourish.

First Major Opportunity: Serverless

Serverless computing hands over all server-related configuration and management tasks to cloud providers, reducing users' burden of managing cloud resources.

For cloud computing users, Serverless services can (automatically) scale elastically without explicitly provisioning resources, not only eliminating the burden of managing cloud resources, but also enabling pay-as-you-go billing. This characteristic largely solves "Challenge 4: The money problem":

Introducing SSR rendering service actually adds a layer of nodes to the network structure, and wherever high traffic passes, every layer costs money.

Moving component rendering logic from the client to the server will inevitably increase costs, but Serverless is expected to keep those costs to a minimum.

On the other hand, the key to Serverless Computing is FaaS (Function as a Service), providing conventional computing capabilities through cloud functions:

Run backend code directly without worrying about servers and other computing resources, as well as service scalability, stability, and other issues. Even supporting facilities like logs, monitoring, and alerts are ready to use out of the box.

That is to say, feed FaaS a JavaScript function, and you can launch a highly available service, without worrying about how to handle high traffic (tens of thousands of QPS), how to ensure service stability and reliability... Sounds somewhat epoch-making, right? In fact, AWS Lambda, Alibaba Cloud FC, and Tencent Cloud SCF are already mature commercial products, and can even be tried for free.

Stateless template rendering (input: React/Vue components, output: HTML) is especially well suited to cloud functions. The most critical backend-specific problem, "Challenge 2: Service stability and performance requirements", is easily solved. The technical challenge SSR faces shrinks from building a highly available component rendering service to writing a JavaScript function:

Compared with client-side programs, server-side programs have much stricter requirements for stability and performance, for example:

- Stability: abnormal crashes, infinite loops (to be solved by frontend personnel themselves)

- Performance:

  - Memory/CPU resource usage (solved by FaaS infrastructure), response speed (network transmission distance, etc. must be considered)

  - How to handle high traffic/high concurrency, how to identify failures, how to degrade/quickly recover (solved by FaaS infrastructure), which stages need caching, how to update the cache...

FaaS infrastructure solves most performance and availability problems. Stability problems within functions can be solved through pure frontend means. The remaining problems, response speed and caching/cache updates, call for another cloud computing concept: edge computing.
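As a rough illustration of what "a JavaScript function" can mean here, the following is a minimal sketch of a stateless render function that degrades to a CSR shell when rendering throws. The event and result shapes, `renderPage`, and `CSR_SHELL` are all hypothetical names invented for this sketch. Real FaaS platforms define their own handler signatures, and `renderPage` merely stands in for rendering a React/Vue component to a string.

```typescript
// Hypothetical event/result shapes; real FaaS platforms define their own.
interface RenderEvent {
  page: string;
  props: Record<string, string>;
}

interface RenderResult {
  statusCode: number;
  html: string;
  degraded: boolean; // true when we fell back to the CSR shell
}

// Escape text before placing it into HTML.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Stand-in for rendering a React/Vue component to a string.
function renderPage(page: string, props: Record<string, string>): string {
  if (page !== "home") {
    throw new Error(`unknown page: ${page}`);
  }
  return `<h1>${escapeHtml(props.title ?? "")}</h1>`;
}

// Empty shell that lets the client render the page itself (CSR fallback).
const CSR_SHELL = '<div id="root"></div><script src="/client.js"></script>';

// The cloud function itself: stateless, input in, HTML out.
function handler(event: RenderEvent): RenderResult {
  try {
    const body = renderPage(event.page, event.props);
    return { statusCode: 200, html: `<div id="root">${body}</div>`, degraded: false };
  } catch {
    // Crashes inside the function are the frontend's job to handle:
    // degrade to client-side rendering instead of failing the request.
    return { statusCode: 200, html: CSR_SHELL, degraded: true };
  }
}
```

Scaling, monitoring, and recovery are left to the platform. The only stability logic kept in the function is the fallback path, which is the part the article assigns to frontend developers.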

Edge Computing

Edge computing distributes computation and data storage to nodes closer to users (CDN nodes, also called edge servers), saving bandwidth while responding to user requests faster:

Edge computing is a distributed computing paradigm that brings computation and data storage closer to the location where it is needed, to improve response times and save bandwidth.

(Excerpted from Edge computing)

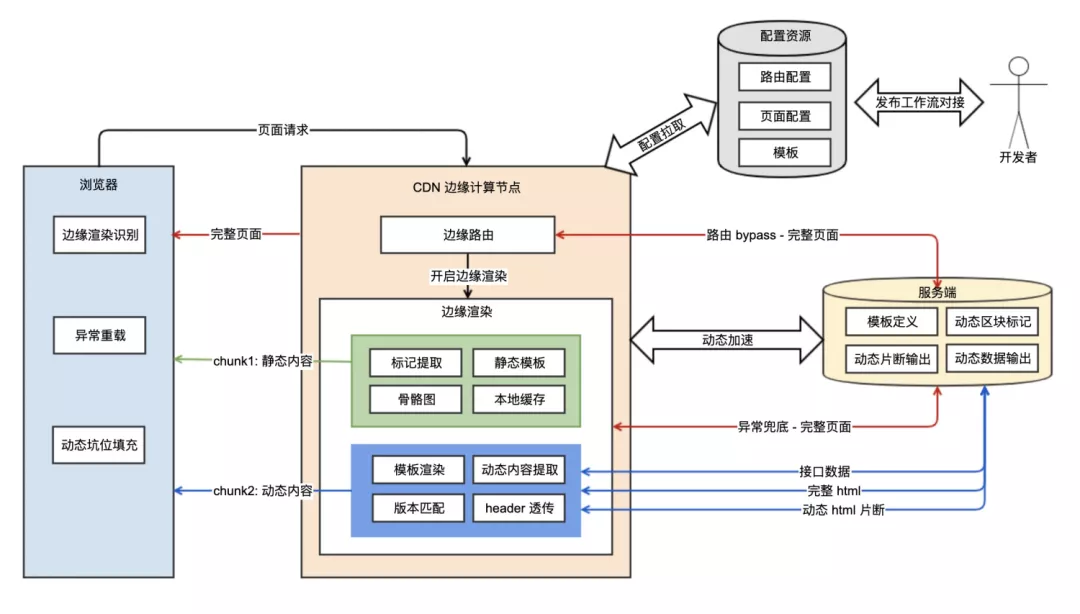

Just as traditional CDNs accelerate resource access by shortening the physical distance between static content and end users while reducing the load on application servers, CDNs that support edge computing allow cloud functions to be deployed to edge nodes, accelerating service responses. Because caching strategies are also easy to control on a CDN, it even becomes possible to achieve edge streaming rendering (ESR) with dynamic-static separation.
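The dynamic-static separation idea can be sketched roughly as follows: the edge node sends the cached static part of the page immediately and appends the dynamic part once it arrives. This is only a sketch under stated assumptions. `edgeCache`, `fetchDynamicPart`, `renderStaticPart`, and the `write` callback are hypothetical stand-ins, not the API of any particular CDN.

```typescript
// Hypothetical edge cache keyed by page path; a real CDN exposes its own cache API.
const edgeCache = new Map<string, string>();

// Stand-in for a request back to the origin for the dynamic part of the page.
async function fetchDynamicPart(path: string): Promise<string> {
  return `<main data-path="${encodeURIComponent(path)}">dynamic content</main>`;
}

// Stand-in for rendering the static shell (head, styles, layout).
function renderStaticPart(): string {
  return "<html><head><title>Page</title></head><body>";
}

// Write the static part first, then the dynamic part when it is ready.
async function streamPage(path: string, write: (chunk: string) => void): Promise<void> {
  // Start the slow dynamic request first so it overlaps with sending the static part.
  const dynamic = fetchDynamicPart(path);

  let staticPart = edgeCache.get(path);
  if (staticPart === undefined) {
    staticPart = renderStaticPart();
    edgeCache.set(path, staticPart);
  }
  write(staticPart); // the browser can start parsing and loading assets here

  write(await dynamic);
  write("</body></html>");
}
```

The static chunk is written before the first `await`, so the user starts receiving bytes without waiting for the dynamic data.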

P.S. For more information about SSR based on edge computing, see Frontend Performance Optimization: When Page Rendering Meets Edge Computing

Second Major Opportunity: low-code

If FaaS solves the core service availability problem of putting SSR into practice, giving SSR wings, then low-code is the runway that lets SSR take off.

Because low-code solves almost all of the other challenges:

- Challenge 1: How to leverage existing CSR code to achieve isomorphism

- Challenge 2: Service stability and performance requirements (already addressed by Serverless)

- Challenge 3: Construction of supporting facilities

- Challenge 4: The money problem (already addressed by Serverless)

- Challenge 5: Performance loss of hydration

- Challenge 6: Data requests

Problems that are hard to solve in source code development mode can be approached from a different dimension in low-code mode, much like solving algebraic problems with geometric methods.

Challenge 1: How to leverage existing CSR code to achieve isomorphism

To make existing CSR code run on the server, many problems must be solved first, for example:

- Client dependencies: divided into API dependencies and data dependencies, such as JS APIs like window/document and device-related data (screen width/height, font size, etc.)

- Lifecycle differences: For example, in React, componentDidMount is not executed on the server.

- Asynchronous operations not executed: The server-side component rendering process is synchronous, so setTimeout, Promise, etc. cannot be waited for.

- Dependency library adaptation: React, Redux, Dva, and even third-party libraries all carry uncertainty about whether they can run in a universal environment and whether their state needs to be shared across environments. Taking the state management layer as an example, SSR requires its store to be serializable.

- Shared state on both sides: Every piece of state that needs to be shared must consider how to pass (server-side) and how to receive (client-side).
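The last two points (a serializable store, plus passing state on the server and receiving it on the client) can be illustrated with a small sketch. The function names and the `__STATE__` element id are assumptions made for this example. The client-side function takes the script text as a parameter so the sketch runs outside a browser.

```typescript
// Server side: embed the serializable store state into the HTML response.
function serializeState(state: Record<string, unknown>): string {
  // Escape "<" so a string containing "</script>" cannot close the tag early.
  const json = JSON.stringify(state).replace(/</g, "\\u003c");
  return `<script id="__STATE__" type="application/json">${json}</script>`;
}

// Client side: read the state back before creating the store.
// In a browser, scriptText would be the text content of the #__STATE__ element.
function deserializeState<T>(scriptText: string): T {
  return JSON.parse(scriptText) as T;
}
```

Anything that does not survive `JSON.stringify` (functions, class instances, circular references) cannot be shared this way, which is why the store must be serializable.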

First, low-code mode is different from source code development. Existing CSR code cannot be directly migrated to the low-code platform. Second, the configuration-based development mode of low-code provides natural fine-grained logic splitting and complete fine-grained control, reflected in:

- Fine-grained logic splitting: Each lifecycle function is configured independently.

- Complete fine-grained control: Dependency libraries, lifecycle, asynchronous operations, and shared state are strictly controlled. The low-code platform has full control over the compile-time and runtime environments of the filled code.

Although client dependencies cannot be eliminated, they can be managed like side effects in functional programming, for example by confining them to specific lifecycle functions (componentDidMount) that execute only on the client, so they do not affect the server. The low-code platform can make lifecycle differences highly visible to users, for example by emphasizing them during the editing and preview stages. Unsupported asynchronous operations can be validated and flagged during the editing stage. As for dependency libraries and state sharing methods, the low-code platform has full control and can constrain them to the supported scope.
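To make the editing-stage validation concrete, here is a minimal sketch of a check a platform might run over independently configured lifecycle functions. The `LifecycleConfig` shape and the `beforeRender` hook name are hypothetical. The naive text scan is also an assumption made for brevity, and a real platform would more likely analyze the parsed code.

```typescript
// Hypothetical configuration: each lifecycle function is filled in separately as source text.
interface LifecycleConfig {
  beforeRender?: string;      // hypothetical hook that also runs on the server
  componentDidMount?: string; // runs on the client only
}

interface Issue {
  hook: string;
  message: string;
}

const CLIENT_ONLY_GLOBALS = ["window", "document", "navigator", "localStorage"];
const ASYNC_PATTERNS = ["setTimeout", "setInterval", "await ", ".then("];

// Flag client-only APIs and un-awaitable async code in hooks that run on the server.
function validateLifecycle(config: LifecycleConfig): Issue[] {
  const issues: Issue[] = [];
  const serverCode = config.beforeRender ?? "";

  for (const name of CLIENT_ONLY_GLOBALS) {
    if (new RegExp(`\\b${name}\\b`).test(serverCode)) {
      issues.push({
        hook: "beforeRender",
        message: `${name} is client-only; move it to componentDidMount`,
      });
    }
  }
  for (const pattern of ASYNC_PATTERNS) {
    if (serverCode.indexOf(pattern) !== -1) {
      issues.push({
        hook: "beforeRender",
        message: `${pattern.trim()} will not be waited for during server rendering`,
      });
    }
  }
  // componentDidMount is deliberately not checked: it never runs on the server.
  return issues;
}
```

Because each hook is a separate configuration field, the platform knows exactly which code can run on the server, something that is much harder to determine from free-form source files.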

In short, low-code easily solves the thorny problems of how to constrain writing style and how to manage uncertainty in source code development mode.

Challenge 3: Construction of supporting facilities

The core part of SSR is the rendering service, but in addition, consider:

- Local development kit (validation + build + preview/HMR + debugging)

- Release process (version management)

A whole set of engineering facilities needs to be reconsidered in SSR mode.

SSR has to solve these supporting-facility problems, and low-code faces the same ones. SSR can therefore reuse, to a certain extent, the online R&D workflow support that low-code provides, extending only some of its stages and reducing the cost of building supporting facilities.

Challenge 5: Performance loss of hydration

As a layer of abstraction, components provide engineering value such as modular development and component reuse, but they also bring some problems. Typically, interaction logic is bound to the component rendering mechanism, which is the fundamental reason SSR needs hydration:

After the client receives the SSR response, in order to support (JavaScript-based) interaction functions, it still needs to create a component tree, associate it with the HTML rendered by SSR, and bind related DOM events to make the page interactive. This process is called hydration.

That is to say, as long as we still rely on the component abstraction layer, the performance loss of hydration is unavoidable. In source code development mode, components are irreplaceable because there is no equivalent abstract description form. However, in low-code mode, its output product (configuration data) is also an abstract description form. If it can have the same expressiveness as components, it is completely possible to remove the component abstraction layer and no longer bear the performance loss of hydration.

On the other hand, for non-interactive (pure static display) and weak-interactive (static display with tracking/jump) semi-static scenarios, the low-code platform can also accurately identify them and avoid unnecessary hydration.
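How a platform might identify such scenarios from configuration data can be sketched as follows. The `NodeConfig` shape is a hypothetical stand-in for a low-code page description. The rule used here (hydrate only if some node declares an event handler) is a simplifying assumption, not a complete classification.

```typescript
// Hypothetical configuration node produced by a low-code editor.
interface NodeConfig {
  type: string;
  href?: string;                   // plain navigation works without JavaScript
  events?: Record<string, string>; // event name -> handler source
  children?: NodeConfig[];
}

// True if this node or any descendant declares an event handler.
function hasInteractiveNode(node: NodeConfig): boolean {
  if (node.events && Object.keys(node.events).length > 0) {
    return true;
  }
  return (node.children ?? []).some(hasInteractiveNode);
}

// Decide at build time whether the page needs to be hydrated at all.
function needsHydration(page: NodeConfig): boolean {
  return hasInteractiveNode(page);
}
```

For a page made only of text, images, and links, `needsHydration` returns false, and the server response can omit the hydration step entirely.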

Challenge 6: Data requests

Server-side synchronous rendering requires sending requests first, and only starts rendering components after getting data. So there are 3 problems:

- Data dependencies must be stripped from business components

- Missing client public parameters (including header information like cookie that the client will carry by default)

- Different data protocols on both sides: The server may have more efficient communication methods, such as RPC.

In low-code development mode, data dependencies are entered in configuration form, naturally stripped. Client public parameters, data protocols, etc. can all be configured through the low-code platform, such as configuring HTTP and RPC protocols, automatically selected by environment.
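A configured data dependency of this kind might look like the sketch below, where one data source declares both an HTTP and an RPC endpoint and the runtime picks one by environment. All type and field names are assumptions for illustration, and no real RPC framework is implied.

```typescript
type Environment = "server" | "client";

// Hypothetical data source entry as entered on a low-code platform.
interface DataSourceConfig {
  name: string;
  http: { url: string; method: "GET" | "POST" };
  rpc?: { service: string; method: string };
  publicParams: string[]; // common parameters (e.g. cookie) to forward
}

interface RequestPlan {
  protocol: "http" | "rpc";
  target: string;
  params: Record<string, string>;
}

// Choose the protocol by environment and fill in the public parameters
// the client would otherwise have attached by default.
function planRequest(
  config: DataSourceConfig,
  env: Environment,
  clientContext: Record<string, string>
): RequestPlan {
  const params: Record<string, string> = {};
  for (const key of config.publicParams) {
    const value = clientContext[key];
    if (value !== undefined) {
      params[key] = value;
    }
  }
  if (env === "server" && config.rpc) {
    return { protocol: "rpc", target: `${config.rpc.service}#${config.rpc.method}`, params };
  }
  return { protocol: "http", target: `${config.http.method} ${config.http.url}`, params };
}
```

Since the dependency lives in configuration rather than inside a component, the server can resolve it before rendering starts, and the same entry serves both environments.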

Third Major Opportunity: 4G/5G Network Environment

In the early mobile era, offline H5 was the industry best practice, because online pages meant second-level loading times, and offline pages had a huge loading speed advantage.

But as network environments have improved, the loading speed advantage of offline pages is no longer a decisive factor (the explosive growth of mini-programs is enough to illustrate this). The dynamic nature of online pages is receiving more attention, offline scenarios (where SSR is powerless) are becoming fewer and fewer, and SSR has more and more room to play.
