Preface

Read-write separation, database partitioning, denormalization, NoSQL... If all of these scaling techniques have been applied and data responses keep getting slower, is there anything else to try?

Yes: add a cache. A cache layer absorbs uneven loads and traffic spikes:

Popular items can skew the distribution, causing bottlenecks. Putting a cache in front of a database can help absorb uneven loads and spikes in traffic.

1. Where to Add?

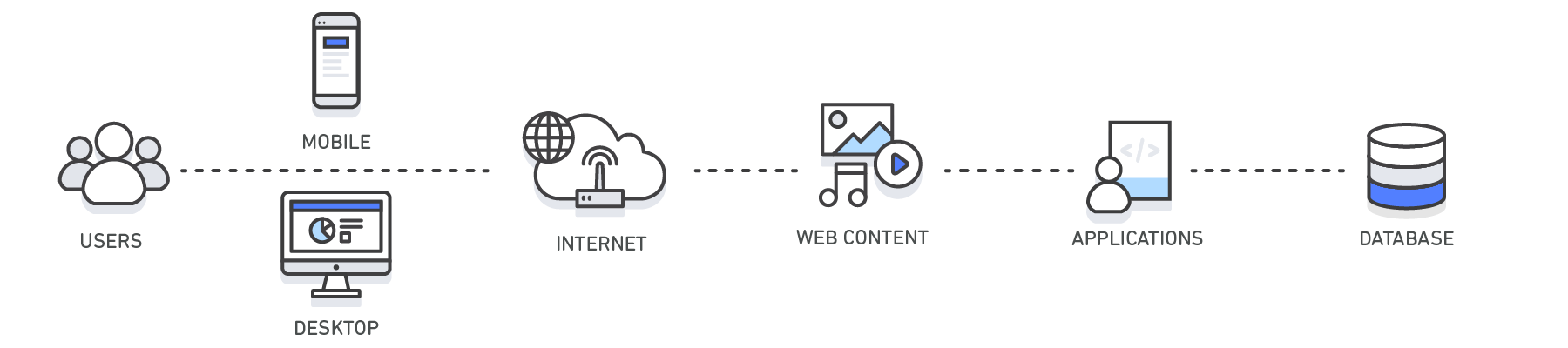

In theory, a cache added at any layer in front of the data layer can intercept traffic and reduce the number of requests that ultimately reach the database.

By location, caches fall into 4 types:

- Client-side cache: Including [HTTP Cache](/articles/http 缓存/), browser cache, etc.
- Web cache: Such as CDN, Reverse Proxy Service, etc.
- Application layer cache: Such as Memcached, Redis and other Key-Value Storage
- Database cache: Some databases provide built-in cache support, such as query cache

To reduce database load, we add a key-value store as a buffer layer between the application and the data storage:

A cache is a simple key-value store and it should reside as a buffering layer between your application and your data storage.

Some requests are then answered from data cached in memory, without executing a database query at all, which improves response time.

2. What to Store?

There are two common cache modes:

- Cached Database Queries: Cache original database query results
- Cached Objects: Cache data model objects in the application, such as reassembled datasets, or entire data model class instances

Cache Original Database Query Results

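Generate a key from the query and cache the database query result, for example:

    import json

    def get_user(user_id):
        # `memcache` and `mysql` are client objects assumed to be configured elsewhere
        key = "user.%s" % user_id
        user_blob = memcache.get(key)
        if user_blob is None:
            # Cache miss: query the database, passing user_id as a bound parameter
            user = mysql.query("SELECT * FROM users WHERE user_id = %s", user_id)
            if user:
                # Store the serialized row so the next lookup skips the database
                memcache.set(key, json.dumps(user))
            return user
        else:
            return json.loads(user_blob)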

The main drawback of this mode is that cache expiration is hard to handle. Because there is no clear association between the data and the key (i.e., the query statement), it is difficult to delete precisely the affected cache entries after the data changes. Consider: when a single cell changes, which queries are affected?

Nevertheless, this is still the most commonly used cache mode, because compromises are possible, such as:

- Cache only data directly associated with the query; do not store the results of sorting, statistics, filtering, or other computations
- Give up on precision and delete every cache entry that might be affected

Cache Data Objects

Another approach is to cache the application's data model objects. This creates a logical association between the raw data and the cache, which makes the hard problem of cache updates easy to solve.

No matter how the data is queried, processed, and transformed, only the final data model object is cached. When the raw data changes, the corresponding data object is removed from the cache as a whole.

For applications, data objects are easier to manage and maintain than raw data, so caching data objects is recommended over caching raw data.
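A minimal sketch of the idea, assuming in-memory dicts stand in for the tables and the cache. `USERS`, `ORDERS`, `UserProfile`, `get_user_profile`, and `add_order` are hypothetical names used only for illustration:

```python
from dataclasses import dataclass

# Hypothetical in-memory stand-ins for the real tables and the cache.
USERS = {1: {"name": "Ada"}}
ORDERS = {1: [{"id": 10, "total": 25.0}, {"id": 11, "total": 40.0}]}
object_cache = {}


@dataclass
class UserProfile:
    """Assembled data model object: one user plus data derived from orders."""
    user_id: int
    name: str
    order_count: int
    total_spent: float


def get_user_profile(user_id):
    key = f"user_profile.{user_id}"
    profile = object_cache.get(key)
    if profile is None:
        # Assemble the object from several raw lookups, then cache the result.
        row = USERS[user_id]
        orders = ORDERS.get(user_id, [])
        profile = UserProfile(user_id, row["name"], len(orders),
                              sum(order["total"] for order in orders))
        object_cache[key] = profile
    return profile


def add_order(user_id, order):
    ORDERS.setdefault(user_id, []).append(order)
    # Any change to the user's raw data drops the whole cached object.
    object_cache.pop(f"user_profile.{user_id}", None)
```

The key is derived from the object's identity rather than from a query string, so the write path knows exactly which entry to drop.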

3. How to Query?

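There are 6 common cache data access strategies:

- Cache-aside / Lazy loading: the application manages the cache alongside the database
- Read-through: reads go through the cache, which loads from the database on a miss
- Write-through: writes go through the cache and are written to the database synchronously
- Write-behind / Write-back: writes go through the cache and are written to the database asynchronously
- Write-around: writes bypass the cache and go directly to the database
- Refresh-ahead: entries are refreshed before they expire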

Cache-aside

In cache-aside mode, the cache and the database have no direct relationship (the cache sits to the side, hence the name). The application reads the required data from the database and fills the cache itself.

Reads go to the cache first. The database is queried only on a cache miss, and the result is then cached. The cache is therefore filled on demand (lazy loading): only data that has actually been accessed is cached.

The main problems are:

- A cache miss takes 3 steps (check the cache, query the database, fill the cache), so the added latency is not negligible (for a cold start, the cache can be warmed manually)
- Cached data can become stale (usually handled by setting a TTL to force an update)
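As a rough sketch of cache-aside with a TTL, assuming plain dicts stand in for the database and the cache. The names `database`, `cache`, `TTL_SECONDS`, and `get` are illustrative, and the `now` parameter exists only so the expiry logic can be exercised without waiting:

```python
import time

database = {"user.1": {"name": "Ada"}}  # hypothetical stand-in for the database
cache = {}                              # key -> (value, expires_at)
TTL_SECONDS = 60


def get(key, now=None):
    now = time.monotonic() if now is None else now
    entry = cache.get(key)
    if entry is not None and entry[1] > now:
        return entry[0]                 # cache hit: a single step
    value = database.get(key)           # miss or expired: query the database
    if value is not None:
        cache[key] = (value, now + TTL_SECONDS)  # then fill the cache
    return value
```

Within the TTL window a changed row is still served from the cache, which is the staleness described above. Once the entry expires, the next read reloads it.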

Read-through

In read-through mode, the cache sits in front of the database. The application does not interact with the database directly; it reads data from the cache.

On a cache miss, the cache itself queries the database and stores the result. The only difference from cache-aside is that the database query is performed by the cache rather than by the application.
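One way to picture this, as a sketch rather than a real cache library: the cache is constructed with a loader function and calls it on a miss. `ReadThroughCache`, `load_from_database`, and `load_calls` are hypothetical names:

```python
class ReadThroughCache:
    def __init__(self, loader):
        self._loader = loader  # called by the cache itself on a miss
        self._store = {}

    def get(self, key):
        if key not in self._store:
            # The cache, not the application, queries the database.
            # Note: this sketch also caches a None result for unknown keys.
            self._store[key] = self._loader(key)
        return self._store[key]


database = {"user.1": "Ada"}  # hypothetical stand-in for the database
load_calls = []               # records each database lookup, for illustration


def load_from_database(key):
    load_calls.append(key)
    return database.get(key)


users = ReadThroughCache(load_from_database)
```

The application only ever calls `users.get(...)`. Repeated reads of the same key reach the loader once.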

Write-through

As in read-through mode, the cache sits in front of the database. Data is written to the cache first and then to the database; in other words, every write goes through the cache.

Write-through is usually combined with read-through. Writes pass through an extra layer and therefore incur additional latency, but the cached data stays consistent (it does not become stale). The cache then acts like a database proxy: both reads and writes go through the cache, which queries the database or synchronously forwards writes to it.
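A minimal sketch of write-through combined with read-through, assuming a dict stands in for the database. `WriteThroughCache` is a hypothetical class, not an API of any particular cache product:

```python
class WriteThroughCache:
    def __init__(self, database):
        self._database = database
        self._store = {}

    def get(self, key):
        # Read-through: load from the database on a miss.
        if key not in self._store:
            self._store[key] = self._database.get(key)
        return self._store[key]

    def put(self, key, value):
        # Write-through: update the cache, then the database, before returning.
        self._store[key] = value
        self._database[key] = value


database = {}  # hypothetical stand-in for the real database
cache = WriteThroughCache(database)
cache.put("user.1", "Ada")
```

Because `put` returns only after both writes, the caller pays the database latency on every write, which is the cost mentioned above.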

Write-behind/Write-back

Write-back is very similar to write-through: writes also go through the cache first. The only difference is that the write to the database is asynchronous, which makes batching and merging of writes possible.

It can also be combined with read-through, and it avoids the write latency of write-through, but it guarantees only eventual consistency.
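The sketch below illustrates batching and merging under simplifying assumptions: a dict stands in for the database, and an explicit `flush()` call takes the place of the background worker or timer a real system would use. `WriteBehindCache` is a hypothetical name:

```python
class WriteBehindCache:
    def __init__(self, database):
        self._database = database
        self._store = {}
        self._dirty = {}  # pending writes, keyed so repeated writes merge

    def get(self, key):
        if key not in self._store:
            self._store[key] = self._database.get(key)
        return self._store[key]

    def put(self, key, value):
        # Only the cache is updated here; the database write is deferred.
        self._store[key] = value
        self._dirty[key] = value  # overwrites any earlier pending write

    def flush(self):
        """Write all pending entries to the database in one batch."""
        batch, self._dirty = self._dirty, {}
        self._database.update(batch)
        return len(batch)


database = {}  # hypothetical stand-in for the real database
cache = WriteBehindCache(database)
cache.put("counter", 1)
cache.put("counter", 2)  # merged with the previous write
cache.put("user.1", "Ada")
```

Until `flush()` runs, the database lags behind the cache. If the cache process dies in that window, the pending writes are lost, which is why only eventual consistency can be promised.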

Write-around

With write-around, writes do not go through the cache (they bypass it). The application writes directly to the database, and only reads are cached. It can be combined with cache-aside or read-through.
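A small sketch of write-around paired with cache-aside reads, with dicts standing in for the database and the cache. Dropping the stale entry on write is an assumption added here, not something the description above requires; relying on a TTL alone is another option:

```python
database = {"user.1": "Ada"}  # hypothetical stand-in for the database
cache = {}


def read(key):
    # Cache-aside read: only reads ever populate the cache.
    if key in cache:
        return cache[key]
    value = database.get(key)
    if value is not None:
        cache[key] = value
    return value


def write(key, value):
    # The write goes straight to the database and never stores the value in the cache.
    database[key] = value
    # Assumption: drop any stale copy so the next read reloads it.
    cache.pop(key, None)
```

Data that is written but never read back therefore never takes up cache space.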

Refresh-ahead

With refresh-ahead, recently accessed entries are refreshed (reloaded) automatically before they expire. Preloading can even reduce latency, but if the prediction of which entries will be needed is inaccurate, performance may degrade instead.
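A simplified sketch, assuming a fixed TTL and a refresh window. `RefreshAheadCache`, `TTL`, `REFRESH_WINDOW`, and `versioned_loader` are hypothetical names. The reload here happens synchronously on access to keep the example short; a real cache would typically reload in the background and return the current value immediately:

```python
import time

TTL = 60.0
REFRESH_WINDOW = 15.0  # refresh when fewer than this many seconds remain


class RefreshAheadCache:
    def __init__(self, loader):
        self._loader = loader
        self._store = {}  # key -> (value, expires_at)

    def get(self, key, now=None):
        now = time.monotonic() if now is None else now
        entry = self._store.get(key)
        if entry is None or entry[1] <= now:
            return self._load(key, now)  # miss or already expired
        value, expires_at = entry
        if expires_at - now < REFRESH_WINDOW:
            # Still valid but close to expiry: reload ahead of time.
            return self._load(key, now)
        return value

    def _load(self, key, now):
        value = self._loader(key)
        self._store[key] = (value, now + TTL)
        return value


load_count = {"n": 0}


def versioned_loader(key):
    # Hypothetical loader that returns a new version number on each call.
    load_count["n"] += 1
    return load_count["n"]


cache = RefreshAheadCache(versioned_loader)
```

Only entries that are actually accessed inside the refresh window get reloaded, so entries nobody asks for simply expire.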

4. What to Do When Full?

Cache space is of course very limited, so an eviction policy is also needed to remove entries that are unlikely to be used again. Common strategies include:

- LRU (Least Recently Used): one of the most commonly used strategies. By the principle of locality, the same data is likely to be accessed again within a short period, so the entries that have gone unused the longest are removed. For example, when booking airline tickets, the same route is likely to be queried repeatedly within a short time
- LFU (Least Frequently Used): removes the least frequently used data. For example, input methods mostly rank suggestions by word frequency
- MRU (Most Recently Used): in some scenarios the most recently used entries are the ones to delete, such as items marked as read, "don't remind me again", or "not interested"
- FIFO (First In, First Out): removes the entry that was added earliest

These strategies can also be combined, for example by weighing both LRU and LFU, depending on the scenario.
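To make LRU concrete, here is a minimal sketch built on the standard library's `collections.OrderedDict`. `LRUCache` and its `keys` helper are hypothetical names chosen for illustration:

```python
from collections import OrderedDict


class LRUCache:
    def __init__(self, capacity):
        self._capacity = capacity
        self._items = OrderedDict()  # ordered from least to most recently used

    def get(self, key, default=None):
        if key not in self._items:
            return default
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key, value):
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self._capacity:
            self._items.popitem(last=False)  # evict the least recently used

    def keys(self):
        return list(self._items)


cache = LRUCache(capacity=2)
cache.put("a", 1)
cache.put("b", 2)
cache.get("a")     # "a" is now more recently used than "b"
cache.put("c", 3)  # over capacity, so "b" is evicted
```

Swapping `popitem(last=False)` for `popitem(last=True)` before inserting would give MRU-style behavior instead, which shows how small the difference between policies can be in code.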
