I. What is Scalability?

Scalability is the property of a system to handle a growing amount of work by adding resources to the system.

In other words, a scalable system copes with an increasing workload by adding resources. There are two ways to add resources:

- Vertical scaling: Upgrade a single machine by adding memory, processors, disks, and other hardware. With enough budget, you can build a lavishly configured server.

- Horizontal scaling: Add machines, going from one server to many that together form a topology. With enough budget, you can own a server room, or even data centers around the world.

For system design, scalability requires that the system be able to make use of added resources (such as multiple cores or multiple machines).

II. The Scalability Struggle Throughout System Design

A user request starts at the client, travels over the network to the web service layer, then enters the application layer, and finally arrives at the data layer:

These stages correspond to the logical layers in system design:

Each layer along the way has its specific scalability patterns:

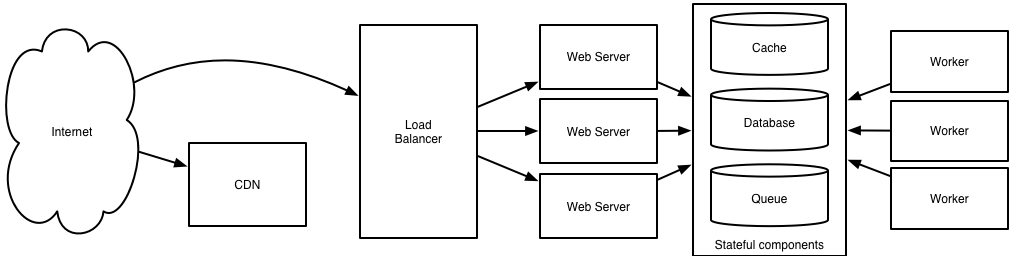

Together, they form a complex system architecture:

It operates as follows:

- The client queries DNS for the IP address of the service. That address may point to a load balancer in front of the web service, or to a CDN that serves static resources from nearby object storage.

- Requests arriving at the web service are distributed by the load balancer (such as a reverse proxy) to web servers according to a configured strategy, and then enter the application layer.

- Once in the application layer, requests go through lookup mechanisms such as Service Discovery or a Service Mesh to find the target microservices, which then start processing them.

- Data requests eventually reach the data layer through a series of read/write APIs. Asynchronous operations are first placed in message queues, but they too eventually reach the data layer.

- Requests for hot data are intercepted by the cache layer in front of the database; the rest fall through to the database. That database may be a SQL database that uses sharding and denormalization for optimization and relies on replication to keep data consistent, a NoSQL database with better query performance, or object storage.
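Two of the steps above, distributing requests by a strategy and stopping hot reads at the cache, can be sketched in a few lines. This is only an illustration under stated assumptions: round-robin is just one possible strategy, the server names are made up, and the "database" is a plain dictionary standing in for a real store. The class names `RoundRobinBalancer` and `CacheAsideReader` are hypothetical and do not come from the original architecture.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backend servers in a fixed rotating order (one possible strategy)."""

    def __init__(self, servers):
        self._servers = cycle(servers)

    def pick(self):
        # Each call returns the next server in the rotation.
        return next(self._servers)


class CacheAsideReader:
    """Serves hot keys from an in-memory cache and falls back to the database."""

    def __init__(self, database):
        self.database = database  # assumption: a dict standing in for a real database
        self.cache = {}
        self.db_reads = 0         # counts how many reads actually hit the database

    def get(self, key):
        if key in self.cache:
            # Hot data: the request stops at the cache layer.
            return self.cache[key]
        # Cache miss: fall through to the database, then remember the value.
        self.db_reads += 1
        value = self.database[key]
        self.cache[key] = value
        return value


# Hypothetical server names, used only for illustration.
balancer = RoundRobinBalancer(["web-1", "web-2", "web-3"])
picks = [balancer.pick() for _ in range(4)]

reader = CacheAsideReader({"user:1": "Ada"})
first = reader.get("user:1")   # miss: read from the database
second = reader.get("user:1")  # hit: served from the cache
```

After the fourth call, the balancer has wrapped around to the first server, and the second read of the same key never touches the database. A real cache would also need eviction and invalidation, which are left out here.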

Many of these patterns introduce new problems while solving others. System design therefore involves constant trade-offs, from CAP choices to distribution strategies.

III. Scalability Requirements in Commercial Context

At this point, we have sorted out the common scalability patterns in system design.

Looking back at scalability:

Scalability means being able to cope with increasing workload by adding resources to the system

By definition alone, a system is scalable as long as it can use added resources to take on more workload. That is indeed the basic requirement of scalability.

However, in real-world scenarios (a commercial context), we demand more of scalability:

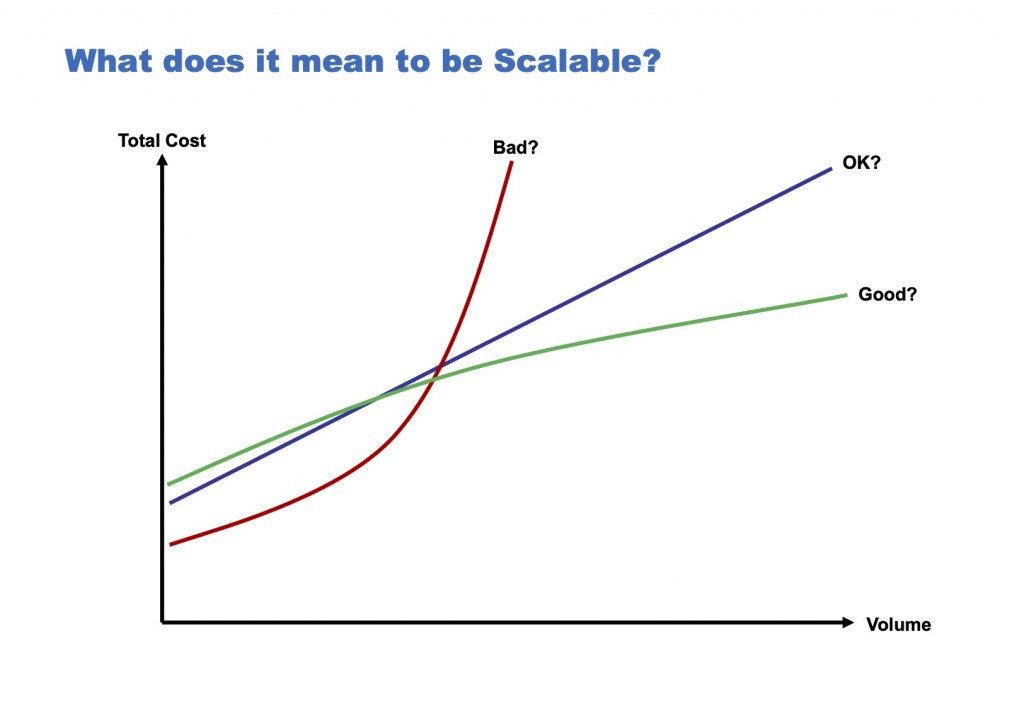

What does it mean to be scalable

Often, blindly adding resources does solve the problem, but from a scalability standpoint, burning money is a shame:

Well, you can just throw hardware at the problem which is the thing that's thrown around our industry a lot. And I think it's a little bit of a shame, because it is just effectively like burning money.

That is, the system must not only use added resources but use them as efficiently as possible, rather than relying on ever more resources to achieve linear expansion.

Utilization is usually measured by cost per transaction (or a similar business metric in non-transactional systems):

Cost per transaction = Total cost / Transaction volume

Costs are divided into fixed costs and variable costs:

- Fixed costs: up-front work and infrastructure, amortization, capital costs, operating costs, etc.

- Variable costs: whether resources can be used on demand, whether bulk discounts are available, and the cost of ensuring resource availability (maintenance, management, etc.)
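The formula can be made concrete with a small calculation. The sketch below assumes a deliberately simple cost model that is not part of the original text: total cost is one fixed amount plus a variable cost that grows linearly with the number of nodes. The function name, parameters, and all figures are hypothetical.

```python
def cost_per_transaction(fixed_cost, variable_cost_per_node, nodes, transactions):
    """Cost per transaction = total cost / transaction volume.

    Assumption: total cost = fixed_cost + variable_cost_per_node * nodes.
    """
    if transactions <= 0:
        raise ValueError("transactions must be positive")
    total_cost = fixed_cost + variable_cost_per_node * nodes
    return total_cost / transactions


# Hypothetical figures: 2 nodes handling 10,000 transactions,
# then 4 nodes handling 18,000 transactions.
small = cost_per_transaction(1000.0, 200.0, nodes=2, transactions=10_000)
large = cost_per_transaction(1000.0, 200.0, nodes=4, transactions=18_000)

print(f"2 nodes: {small:.3f} per transaction")
print(f"4 nodes: {large:.3f} per transaction")
```

With these made-up numbers, the larger deployment is cheaper per transaction (about 0.10 versus 0.14) because the fixed cost is spread over more transactions, even though throughput did not quite double.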

Therefore, in a commercial context, the question scalability must answer is: if you add one machine, how much more business volume can the system support?

A typical question I would ask of anyone is if you add another node to your system how many more units of work or transactions or whatever do you get out of that.

It is not enough to be sure that adding machines lets the system cope with more workload; you also need to know precisely how much "more" is, so that costs can be optimized.
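One way to answer "how much more" is to measure throughput at several cluster sizes and compute the gain per added node. The following is a minimal sketch: the measurements are invented for illustration, and the helper names `marginal_gain` and `scaling_efficiency` are hypothetical, not an established API.

```python
def marginal_gain(throughput_by_nodes):
    """Return the extra throughput obtained per added node between measurements.

    throughput_by_nodes maps a node count to the throughput measured at that size.
    """
    counts = sorted(throughput_by_nodes)
    gains = {}
    for prev, curr in zip(counts, counts[1:]):
        added_throughput = throughput_by_nodes[curr] - throughput_by_nodes[prev]
        gains[curr] = added_throughput / (curr - prev)
    return gains


def scaling_efficiency(throughput_by_nodes):
    """Compare measured throughput with perfect linear scaling from one node."""
    single = throughput_by_nodes[1]  # assumption: a 1-node measurement exists
    return {n: t / (n * single) for n, t in throughput_by_nodes.items()}


# Hypothetical measurements: transactions per second at 1, 2, and 4 nodes.
measured = {1: 1000, 2: 1900, 4: 3400}

gains = marginal_gain(measured)
efficiency = scaling_efficiency(measured)
```

Here the second node adds 900 transactions per second, while each of the next two adds only 750, and efficiency relative to linear scaling drops from 0.95 to 0.85. Tracking these figures alongside cost per transaction shows when adding another machine stops paying for itself.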
